Unicode Text Conversion Guide: Decoding UTF-8 and Special Characters

In our interconnected digital world, text is the fundamental medium of communication. From emails and websites to documents and databases, characters are constantly being transmitted, stored, and displayed. Yet, often, this seemingly simple process can go awry, leading to perplexing strings of symbols like "ü," "Ã," or even unreadable gibberish instead of the intended text. This phenomenon, known as "mojibake," or garbled text, is a common frustration for developers, content creators, and everyday users alike. Imagine trying to search for "デッド アイランド 2 攻略" (Dead Island 2 strategy) only for your browser to display it as a confusing jumble of question marks or boxes. Understanding Unicode and its primary encoding, UTF-8, is your key to unlocking clear communication and banishing these cryptic characters for good.

This comprehensive guide will demystify Unicode, delve into the intricacies of UTF-8, explain why special characters sometimes fail to display correctly, and equip you with practical strategies for conversion and decoding. By mastering these concepts, you'll ensure your text—whether it's an accented letter, an emoji, or characters from any of the world's languages—is always represented faithfully.

Understanding the Universal Language: What is Unicode?

Before Unicode, the digital landscape was a linguistic Tower of Babel. Different "code pages" existed for various languages, each mapping a limited set of numbers to characters. This meant that a character represented by a certain number in one code page might be a completely different character (or nothing at all) in another. This led to endless compatibility issues, especially when exchanging text across different systems or regions.

Enter Unicode. Conceived as a universal character set, Unicode provides a unique number, called a code point, for every character in every language, script, and symbol imaginable. From the Latin alphabet (A, B, C) to Cyrillic (А, Б, В), Arabic (أ, ب, ت), Chinese (一, 二, 三), emojis (😂, 👍), and even specialized symbols, Unicode assigns a distinct identity to each. This ambitious standard aims to encompass all known characters, historical and modern, ensuring that any text, regardless of its origin, can be represented consistently.

The Unicode standard doesn't dictate how these numbers are stored as bytes in a computer's memory; that's where character encodings come in. It simply provides the definitive mapping from character to numerical code point. This distinction is crucial for understanding why we often talk about "Unicode" and "UTF-8" in the same breath but as separate concepts.
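To make the split between code point and encoding concrete, here is a minimal Python sketch. The helper name `describe` is hypothetical, chosen only for illustration; it reports one character's code point next to the bytes that three different encodings produce for it.

```python
def describe(char: str) -> dict:
    """Return the code point of one character and its bytes in three encodings."""
    return {
        "code_point": f"U+{ord(char):04X}",            # the abstract Unicode number
        "utf-8": char.encode("utf-8").hex(" "),        # variable width, 1 to 4 bytes
        "utf-16-be": char.encode("utf-16-be").hex(" "),
        "utf-32-be": char.encode("utf-32-be").hex(" "),
    }

for ch in ["A", "é", "二", "😂"]:
    print(ch, describe(ch))
```

The code point stays the same in every row of the output; only the byte sequences differ from one encoding to the next.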

Decoding UTF-8: The Web's Dominant Encoding

While Unicode provides the universal map of characters, an encoding is the method used to translate these Unicode code points into sequences of bytes that computers can store and process. Many encodings exist (UTF-16, UTF-32, ISO-8859-1, etc.), but one stands head and shoulders above the rest in terms of prevalence and importance: UTF-8.

UTF-8 (Unicode Transformation Format - 8-bit) is a variable-width encoding that has become the de facto standard for the internet. Here's why it's so dominant:

- Variable-Width Efficiency: UTF-8 represents common ASCII characters (like those used in English) using just one byte, making it incredibly efficient for English text and fully backward compatible with ASCII. This means older systems or files encoded in ASCII can be read by UTF-8 decoders without issue.

- Global Reach: For characters outside the ASCII range (like é, ñ, ä, or complex scripts such as Japanese characters found in "デッド アイランド 2 攻略"), UTF-8 uses two, three, or four bytes. This allows it to represent the entire Unicode character set, supporting virtually every language on the planet.

- Byte Order Independence: Unlike some other encodings (like UTF-16), UTF-8 does not suffer from byte order issues, simplifying cross-platform compatibility.

The ingenious design of UTF-8 means that it is both compact for common Latin-based text and expansive enough for global linguistic needs. This combination has made it the encoding of choice for web pages, operating systems, databases, and nearly every digital text standard.
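The variable-width behavior described above can be checked directly. The following is a small Python sketch, with `utf8_length` as a hypothetical helper that derives the byte count from the code point range and compares it with what Python's own encoder produces.

```python
def utf8_length(char: str) -> int:
    """Number of bytes UTF-8 needs for one character, based on its code point."""
    cp = ord(char)
    if cp <= 0x7F:       # ASCII range
        return 1
    if cp <= 0x7FF:      # e.g. accented Latin, Cyrillic, Arabic
        return 2
    if cp <= 0xFFFF:     # rest of the Basic Multilingual Plane, e.g. Japanese
        return 3
    return 4             # supplementary planes, e.g. most emoji

for ch in ["A", "ü", "€", "デ", "👍"]:
    # Cross-check the rule against the real encoder.
    assert utf8_length(ch) == len(ch.encode("utf-8"))
    print(f"{ch!r}: {utf8_length(ch)} byte(s)")

# ASCII compatibility: pure-ASCII bytes decode identically as ASCII and as UTF-8.
assert b"hello".decode("ascii") == b"hello".decode("utf-8")
```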

The Menace of Mojibake: When Special Characters Go Wrong

If Unicode and UTF-8 are so well-designed, why do we still encounter garbled text? The answer almost always lies in a mismatch between the encoding used to save or transmit text and the encoding used to interpret or display it. This results in "mojibake," or characters that appear incorrect, jumbled, or replaced by placeholder symbols. Common examples include:

- "ü" instead of "ü": This classic mojibake often occurs when UTF-8 encoded text containing a two-byte character (like 'ü') is mistakenly interpreted as ISO-8859-1 or a similar single-byte encoding. Each byte is then displayed as a separate, incorrect character.

- "€" instead of "€": Similar to the above. The Euro symbol is a three-byte character in UTF-8, so when those bytes are read as Windows-1252, each byte is displayed as its own incorrect symbol.

- Question Marks or Boxes: Empty boxes usually mean the system has no font with a glyph for the character. Question marks usually mean the text passed through an encoding that cannot represent the character, or was too mangled to decode, so a placeholder was substituted, such as when "デッド アイランド 2 攻略" is displayed as '?????????'.
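The examples above can be reproduced on purpose, which is often the quickest way to recognize them later. This is a minimal Python sketch; `misread` and `to_ascii_lossy` are hypothetical helper names used only for illustration.

```python
def misread(text: str, wrong_encoding: str) -> str:
    """Encode text as UTF-8, then decode the bytes with the wrong encoding."""
    return text.encode("utf-8").decode(wrong_encoding, errors="replace")

def to_ascii_lossy(text: str) -> bytes:
    """Force text into ASCII; characters ASCII cannot represent become '?'."""
    return text.encode("ascii", errors="replace")

print(misread("ü", "latin-1"))    # two wrong characters for one two-byte character
print(misread("€", "cp1252"))     # three wrong characters for one three-byte character
print(to_ascii_lossy("デッド"))    # question marks: the original characters are gone
```

The first two cases keep the original bytes intact and only display them wrongly. The third replaces the characters, so the original text cannot be recovered from the output.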

Common Causes of Mojibake:

- Missing or Incorrect Character Set Declaration: Web browsers or email clients need to be told what encoding a document uses. If this information is missing (e.g., no `<meta charset="utf-8">` in HTML) or incorrect, the browser will guess, often wrongly.

- Saving Files with the Wrong Encoding: A text file saved as ANSI (a legacy encoding) but opened expecting UTF-8, or vice versa, will inevitably lead to garbled text.

- Database Encoding Mismatches: If data is stored in a database with one character set (e.g., Latin1) and retrieved by an application expecting another (e.g., UTF-8), conversion errors are rampant.

- Copy-Pasting Between Applications: Some applications handle clipboard data differently, and pasting text from an application with one internal encoding to another with a different default can corrupt characters.

- Legacy System Integration: Older systems built before UTF-8 was widespread often use fixed-width or locale-specific encodings, creating hurdles when integrating with modern, globalized applications.

The good news is that in most cases, the underlying data isn't truly corrupted; it's merely being misinterpreted. With the right tools and techniques, you can often convert garbled text back to its original, legible form.
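When the damage is a plain misinterpretation, reversing it means re-creating the original bytes and decoding them correctly. The sketch below assumes the text was UTF-8 that got read as Windows-1252, which is only one of several possible mix-ups; `repair_mojibake` is a hypothetical helper, not a library function.

```python
def repair_mojibake(garbled: str, wrong_encoding: str = "cp1252") -> str:
    """Undo a 'UTF-8 read as wrong_encoding' mistake.

    Returns the input unchanged when the reversal does not apply, for
    example when the text was never garbled in the first place.
    """
    try:
        # Step 1: turn the wrong characters back into the original bytes.
        # Step 2: decode those bytes as the UTF-8 they always were.
        return garbled.encode(wrong_encoding).decode("utf-8")
    except (UnicodeEncodeError, UnicodeDecodeError):
        return garbled

garbled = "ü".encode("utf-8").decode("cp1252")   # simulate the mistake
print(repair_mojibake(garbled))                  # the original character again
print(repair_mojibake("café"))                   # already correct, left alone
```

Returning the input on failure is a deliberate choice here: a repair step that raises on clean text would be hard to apply to mixed data.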

Practical Strategies for Unicode Conversion and Decoding

Successfully converting and decoding Unicode text, especially when dealing with garbled characters, requires a systematic approach. Here are actionable tips and strategies:

Identifying the Problematic Encoding

The first step is always to identify what the text *should* be, and then what encoding it *is currently being interpreted as*. This can be challenging but crucial.

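- Browser Developer Tools: Most modern browsers let you inspect the character encoding of a web page. The "Network" tab of developer tools shows the Content-Type response header, evaluating `document.characterSet` in the console reports the encoding the browser actually used, and some browsers offer a "Text Encoding" option in the view menu.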

- Text Editors: Advanced text editors like VS Code, Sublime Text, Notepad++, or Atom can often detect and display the encoding of an opened file. They also allow you to save files with a specific encoding.

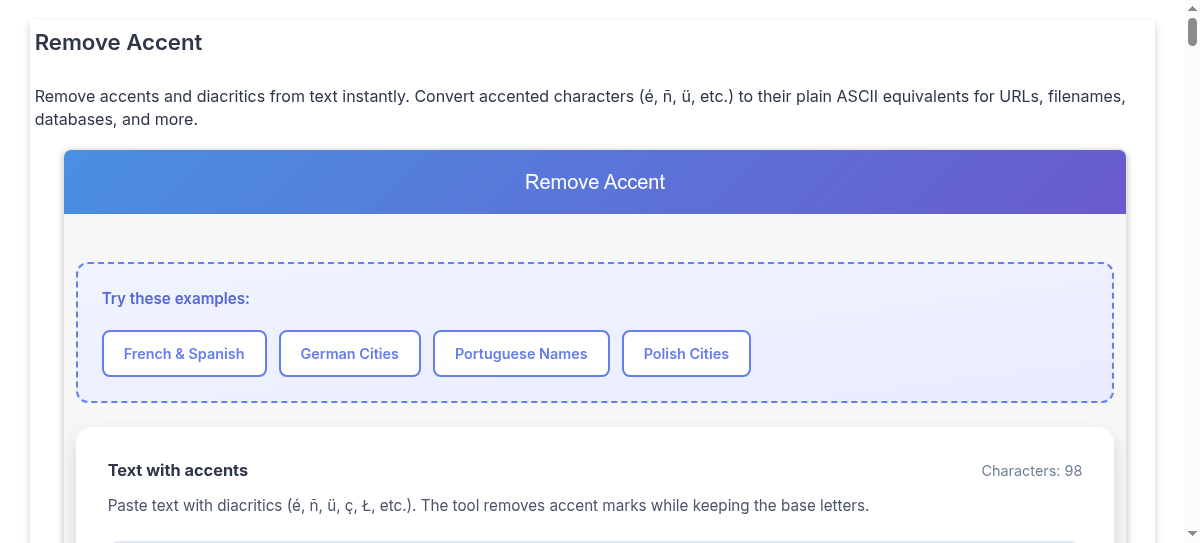

- Online Detectors: Many online tools let you paste in garbled text and attempt to guess the original encoding, or perform conversions directly. See our article "Best Online Tools for Unicode Encoding and Text Translation Explained" for an overview.
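If you have the raw bytes, a simple way to narrow down the encoding is to try a short list of candidates and read the results. The following Python sketch assumes a candidate list (`utf-8`, `cp1252`, `shift_jis`, `latin-1`) picked for illustration; `candidate_decodings` is a hypothetical helper.

```python
def candidate_decodings(raw: bytes,
                        encodings=("utf-8", "cp1252", "shift_jis", "latin-1")) -> dict:
    """Return {encoding: decoded_text} for every candidate that decodes without error."""
    results = {}
    for name in encodings:
        try:
            results[name] = raw.decode(name)   # strict decoding: invalid bytes raise
        except UnicodeDecodeError:
            continue                           # this candidate is ruled out
    return results

raw = "デッド".encode("shift_jis")              # bytes from a hypothetical legacy file
for name, text in candidate_decodings(raw).items():
    print(f"{name:10} {text!r}")
```

A successful decode does not prove the guess is right. Single-byte encodings such as latin-1 accept any byte sequence, so a person still has to judge which result is legible.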

Converting and Fixing Garbled Text

Once you have an idea of the original encoding (or at least know that it's supposed to be UTF-8), you can apply conversion techniques:

- Explicit Character Set Declarations (Web): For HTML pages, always include `<meta charset="utf-8">` as early as possible within the `<head>` section. Additionally, ensure your web server sends the correct `Content-Type: text/html; charset=utf-8` HTTP header.

- Save Files as UTF-8: When creating or editing text files, especially source code, configuration files, or data files, always save them with UTF-8 encoding (without BOM). The "BOM" (Byte Order Mark) can sometimes cause issues in certain environments, though it's less common with modern systems.

- Programming Language Functions: Most programming languages provide robust functions for handling character encodings.

- In Python: Strings are Unicode by default. Use `my_string.encode('utf-8')` to convert a string into bytes using UTF-8, and `my_bytes.decode('utf-8')` to convert bytes back into a string. If you suspect mojibake, you might try decoding with a different encoding (e.g., `my_bytes.decode('latin-1')`) to see if the garbled text becomes legible before re-encoding to UTF-8.

- In PHP: Functions like `mb_convert_encoding()` are essential for converting strings between various encodings. Always specify input and output encodings.

- In JavaScript: The web is largely UTF-8 native. For URL encoding/decoding, use `encodeURIComponent()` and `decodeURIComponent()`.

- Database Configuration: Ensure your database, tables, and columns are configured to use a UTF-8 character set (e.g., `utf8mb4` in MySQL for full emoji support). Also, verify that your client connection to the database specifies UTF-8.

- Online Unicode Converters: For quick fixes or to experiment with different decoding attempts, various online tools can translate between encodings. These are particularly useful for small snippets of text that are displaying incorrectly. For advanced techniques and more online tools, refer to our article on Mastering Unicode Decoding: Convert Garbled Text and Special Characters.
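For files, the conversion advice above comes down to naming the encoding every time a file is opened. This is a minimal Python sketch; the helper names and file names are hypothetical, and the legacy encoding (`cp1252`) is an assumption you would replace with whatever your file actually uses.

```python
import codecs
import os
import tempfile

def write_utf8(path: str, text: str) -> None:
    """Write text as UTF-8 without a BOM."""
    with open(path, "w", encoding="utf-8", newline="") as f:
        f.write(text)

def read_utf8(path: str) -> str:
    """Read UTF-8 text; the 'utf-8-sig' codec drops a leading BOM if present."""
    with open(path, "r", encoding="utf-8-sig", newline="") as f:
        return f.read()

def convert_file(src: str, dst: str, src_encoding: str) -> None:
    """Re-encode a legacy file as UTF-8, naming the source encoding explicitly."""
    with open(src, "r", encoding=src_encoding, newline="") as f:
        text = f.read()
    write_utf8(dst, text)

with tempfile.TemporaryDirectory() as tmp:
    legacy = os.path.join(tmp, "legacy.txt")
    fixed = os.path.join(tmp, "fixed.txt")
    with open(legacy, "wb") as f:
        f.write("café ü".encode("cp1252"))       # simulate a legacy "ANSI" file
    convert_file(legacy, fixed, "cp1252")
    with open(fixed, "rb") as f:
        data = f.read()
    assert data == "café ü".encode("utf-8")      # re-encoded as UTF-8
    assert not data.startswith(codecs.BOM_UTF8)  # and no BOM was written
```

Passing `encoding=` explicitly matters because the default used by `open()` depends on the platform and locale, which is exactly the kind of silent assumption that produces mojibake.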

Tips for Preventing Future Issues:

- Standardize on UTF-8: Make UTF-8 your default encoding for everything: operating systems, text editors, databases, web servers, and client-side applications. Consistency is key.

- Explicitly Declare Encoding: Never assume encoding. Always explicitly declare it in your code, HTTP headers, and file metadata.

- Validate Input: If you're receiving text from external sources, consider validating its encoding upon receipt or converting it to UTF-8 immediately to prevent propagation of issues.

- Test with Diverse Characters: Regularly test your applications with a wide range of Unicode characters, including common special characters, accented letters, and non-Latin scripts (like the Japanese characters in "デッド アイランド 2 攻略") to catch encoding problems early.
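The validation and testing tips can be combined into one small check. The Python sketch below assumes incoming data should be UTF-8 and that anything invalid is Windows-1252; that fallback is an assumption for illustration, and `to_text`, `round_trips`, and `SAMPLES` are hypothetical names.

```python
def to_text(raw: bytes, fallback: str = "cp1252") -> str:
    """Decode as strict UTF-8; fall back to a declared legacy encoding if invalid."""
    try:
        return raw.decode("utf-8")                    # strict by default
    except UnicodeDecodeError:
        return raw.decode(fallback, errors="replace")

# A small test set: ASCII, accented letters, a symbol, Japanese, and emoji.
SAMPLES = ["ASCII only", "ü é ñ", "€", "デッド アイランド 2 攻略", "😂👍"]

def round_trips(text: str) -> bool:
    """True if text survives being encoded to UTF-8 and read back by to_text."""
    return to_text(text.encode("utf-8")) == text

for sample in SAMPLES:
    assert round_trips(sample), sample

# Legacy input is converted once, at the boundary, instead of spreading further.
assert to_text("café".encode("cp1252")) == "café"
```

In a real application the same sample strings would be pushed through every layer that touches text, such as forms, the database, and exports, rather than through a single function.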

Conclusion

Unicode and UTF-8 are the unsung heroes of our global digital ecosystem, enabling seamless communication across languages and platforms. While the occasional appearance of garbled text can be frustrating, understanding the principles behind character sets and encodings empowers you to diagnose and resolve these issues effectively. By consistently using UTF-8, explicitly declaring your encodings, and leveraging the right conversion tools and techniques, you can ensure that your text—from a simple email to a complex web application—is always displayed correctly, fostering clear communication in an increasingly diverse digital world. Mastering Unicode text conversion isn't just a technical skill; it's a fundamental aspect of creating truly universal and user-friendly digital experiences.